The first component of ThrustDB I’m delivering is a custom thread pool connection handler named Thread Pool Hybrid (get it here). It’s Linux-only, uses no dynamic memory allocation (though epoll_ctl with EPOLL_CTL_ADD uses red-black trees in the kernel), can handle a very high number of clients with very little slowdown, and can be used with any modern MySQL system.
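Avoiding dynamic memory allocation usually means sizing everything up front. As a rough illustration only (this is not code from thread_pool_hybrid; `TP_MAX_CONNS`, `tp_conn`, and the helper functions are hypothetical), a fixed, statically allocated connection table with an intrusive free list never needs `malloc`. The kernel still allocates its own bookkeeping when a socket is registered with `epoll_ctl`, which is the red-black tree caveat mentioned above.

```c
#include <stddef.h>

/* Hypothetical compile-time cap on connections per pool. Sizing the table
   up front lets it live in static storage, so malloc() is never needed. */
#define TP_MAX_CONNS 4096

typedef struct tp_conn {
    int fd;        /* client socket, or -1 while the slot is free */
    int next_free; /* index of the next free slot, or -1 at the end of the list */
} tp_conn;

static tp_conn tp_conns[TP_MAX_CONNS];
static int tp_free_head = -1; /* -1 means the table is full (or not initialized) */

/* Thread a free list through every slot once at startup. */
void tp_conns_init(void) {
    for (int i = 0; i < TP_MAX_CONNS; i++) {
        tp_conns[i].fd = -1;
        tp_conns[i].next_free = (i + 1 < TP_MAX_CONNS) ? i + 1 : -1;
    }
    tp_free_head = 0;
}

/* Take a free slot for fd, or return NULL if every slot is in use. */
tp_conn *tp_conn_acquire(int fd) {
    if (tp_free_head < 0)
        return NULL;
    tp_conn *c = &tp_conns[tp_free_head];
    tp_free_head = c->next_free;
    c->fd = fd;
    c->next_free = -1;
    return c;
}

/* Push a slot back onto the head of the free list. */
void tp_conn_release(tp_conn *c) {
    c->fd = -1;
    c->next_free = tp_free_head;
    tp_free_head = (int)(c - tp_conns);
}
```

The free list is LIFO, so a just-released slot is handed out next. The sketch is not thread-safe; a real pool would guard it with the pool's own lock.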

Compared to the default connection-per-thread handler that ships with MySQL Community Edition, Thread Pool Hybrid supports 10x or more active clients with little slowdown in throughput. It also uses far less memory per connection: connection-per-thread requires a dedicated thread with its own runtime stack, consuming memory and resources that are better spent on a smaller number of executing threads.

However, I discovered that one disadvantage of using epoll is an inherent latency when the thread count is relatively low, which reduces throughput.

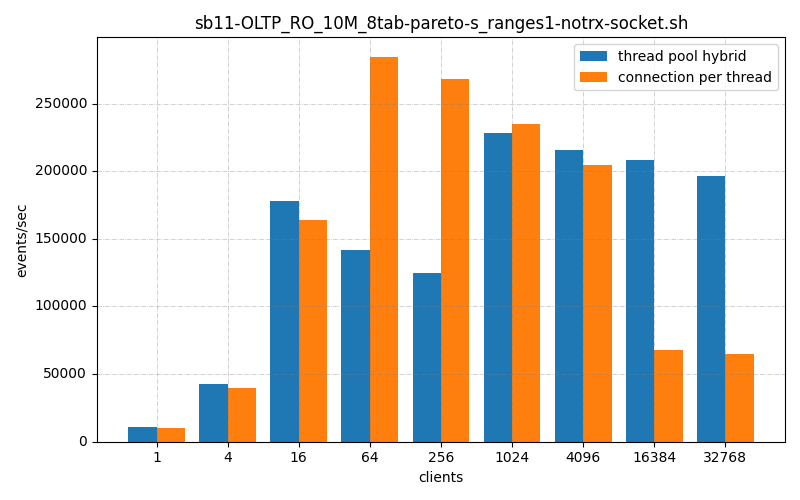

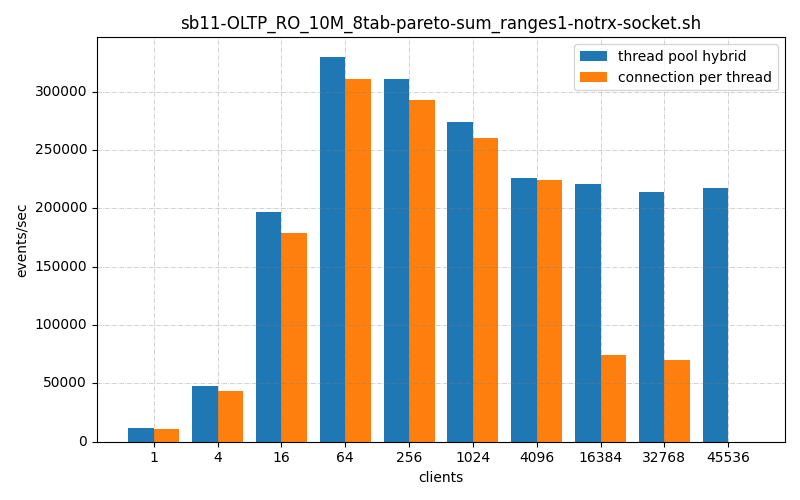

I benchmarked vanilla MySQL 8.0.42 both with and without my plug-in connection handler, using the BMK-kit from Oracle’s community downloads to run some of the 10-million, 8-table Pareto distribution tests. These are read-only tests, so they measure scalability without disk I/O (reads or writes) getting in the way. What I found was a bit troubling.

As we can see, Thread Pool Hybrid kept up with connection-per-thread until around 64 clients, then fell behind, and only began to catch up again around 1024 clients.

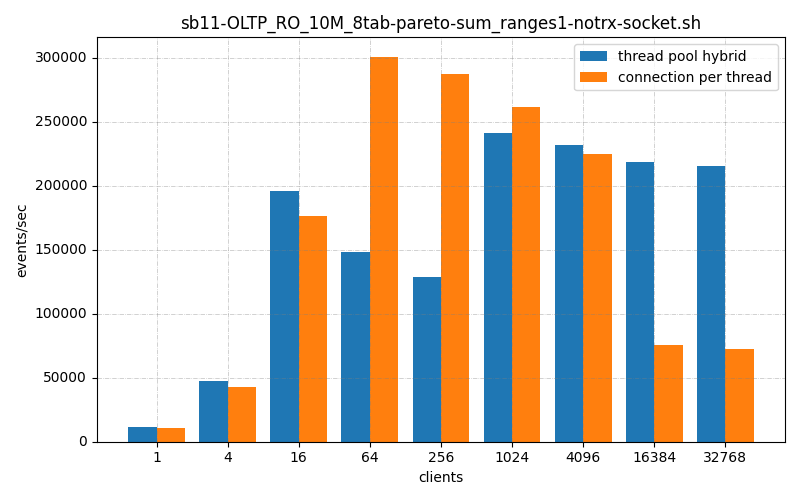

We see similar numbers in other tests:

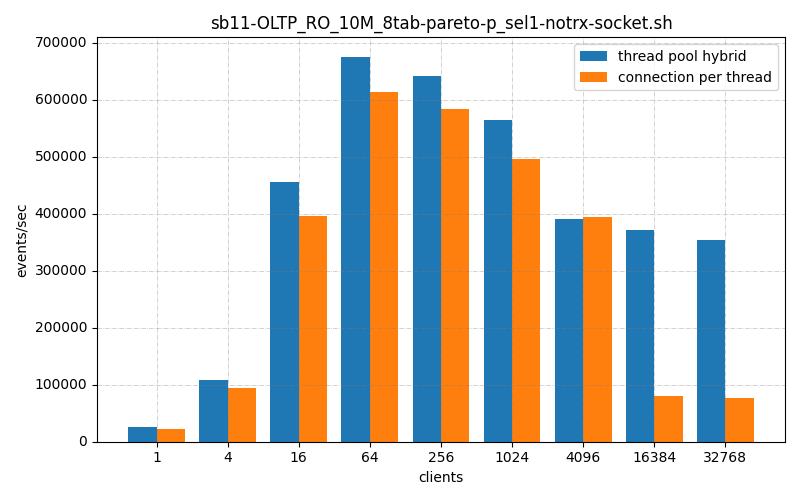


Well, this isn’t the home run I was hoping for!

It’s definitely better on the high end, and it keeps pace or does better on the low end. But from 64 to 1024 clients, it’s lacking.

Enter the HYBRID model!

The thing is, epoll is very scalable at high client counts, and at low client counts it is comparable to connection-per-thread, but there is a middle ground where it lags badly. That gave me an idea: as long as the thread pool has no more connections than threads, why not use regular poll(...), and when one more connection comes in, have all the threads waiting in poll(...) revert to epoll_wait(...)? With that in mind, I created the hybrid thread pool.
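The switch-over rule itself is simple enough to express as a small pure function. This is a minimal sketch assuming each pool tracks its live connection count and its worker thread count; `tp_pool`, `tp_mode_for`, and the connect/disconnect hooks are hypothetical names, not the plug-in’s actual API. The hooks report when a connection crosses the threshold, which is the moment the waiting threads would need to be told to change modes.

```c
#include <stdatomic.h>
#include <stdbool.h>

/* How idle workers in a pool wait for work. */
typedef enum { TP_MODE_POLL, TP_MODE_EPOLL } tp_mode;

/* Hypothetical per-pool counters. */
typedef struct {
    atomic_int connections; /* live client connections in the pool */
    int max_threads;        /* worker threads in the pool */
} tp_pool;

/* Poll mode while every connection can have its own thread;
   epoll mode once connections outnumber threads. */
tp_mode tp_mode_for(int connections, int max_threads) {
    return connections > max_threads ? TP_MODE_EPOLL : TP_MODE_POLL;
}

/* Register a new connection. Returns true if this connection is the one
   that pushes the pool from poll mode into epoll mode. */
bool tp_on_connect(tp_pool *p) {
    int before = atomic_fetch_add(&p->connections, 1);
    return tp_mode_for(before, p->max_threads) == TP_MODE_POLL &&
           tp_mode_for(before + 1, p->max_threads) == TP_MODE_EPOLL;
}

/* Unregister a connection. Returns true if the pool drops back into poll mode. */
bool tp_on_disconnect(tp_pool *p) {
    int before = atomic_fetch_sub(&p->connections, 1);
    return tp_mode_for(before, p->max_threads) == TP_MODE_EPOLL &&
           tp_mode_for(before - 1, p->max_threads) == TP_MODE_POLL;
}
```

Because `atomic_fetch_add` hands each caller a distinct previous value, exactly one of several concurrently arriving connections observes the crossing and becomes responsible for waking the other threads.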

And it works beautifully.

Now this is what I’m talking about!

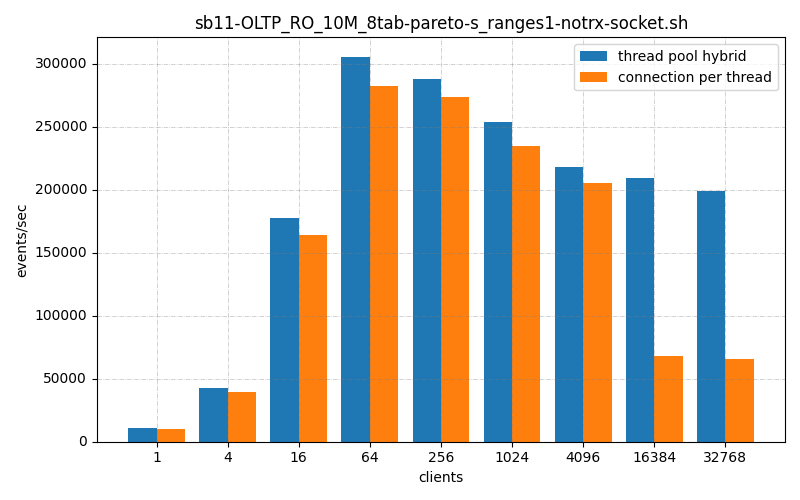

As the connection count moves past the maximum threads per pool, the thread pool automatically changes from having each thread monitor a single connection to having all waiting threads switch to epoll. Threads actively servicing a connection will switch to epoll once they’ve completed their query.
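The post doesn’t say how threads blocked in poll(...) find out that they should switch. One plausible mechanism, offered purely as an assumption, is to give every poll(...) call a second descriptor: a pool-wide `eventfd` that the thread admitting the extra connection writes to. Because an eventfd stays readable until someone reads it, a single write wakes every waiting thread at once. `tp_poll_wait`, `tp_signal_switch`, and `tp_clear_switch` are hypothetical helpers.

```c
#include <poll.h>
#include <stdint.h>
#include <sys/eventfd.h>
#include <unistd.h>

/* Hypothetical outcomes of one poll-mode wait. */
typedef enum {
    TP_WAIT_CLIENT_READY,    /* the thread's own connection has I/O */
    TP_WAIT_SWITCH_TO_EPOLL, /* the pool went over its thread count */
    TP_WAIT_TIMEOUT,
    TP_WAIT_ERROR
} tp_wait_result;

/* Wait on this thread's single client socket plus the pool-wide switch eventfd.
   The switch fd is checked first so a pending mode change is never missed. */
tp_wait_result tp_poll_wait(int client_fd, int switch_efd, int timeout_ms) {
    struct pollfd fds[2] = {
        { .fd = client_fd,  .events = POLLIN },
        { .fd = switch_efd, .events = POLLIN },
    };
    int n = poll(fds, 2, timeout_ms);
    if (n < 0)
        return TP_WAIT_ERROR;
    if (n == 0)
        return TP_WAIT_TIMEOUT;
    if (fds[1].revents & POLLIN)
        return TP_WAIT_SWITCH_TO_EPOLL;
    if (fds[0].revents & (POLLIN | POLLHUP | POLLERR))
        return TP_WAIT_CLIENT_READY;
    return TP_WAIT_ERROR; /* e.g. POLLNVAL on a closed descriptor */
}

/* Make the eventfd readable; every thread blocked in tp_poll_wait wakes up. */
int tp_signal_switch(int switch_efd) {
    uint64_t one = 1;
    return write(switch_efd, &one, sizeof one) == (ssize_t)sizeof one ? 0 : -1;
}

/* Reset the eventfd counter to zero (read() on a non-semaphore eventfd
   returns the whole count) so poll mode can be used again. */
int tp_clear_switch(int switch_efd) {
    uint64_t count;
    return read(switch_efd, &count, sizeof count) == (ssize_t)sizeof count ? 0 : -1;
}
```

A thread that is busy running a query never sees the signal mid-query; in this sketch it would simply check the pool’s mode when the query finishes. Whichever thread later moves the pool back to poll mode is responsible for draining the eventfd.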

Once a thread is in epoll_wait(...), it can get an event from any connection in that thread pool. If the connection count has dropped below the maximum thread count, the thread handles that connection’s I/O and then goes back to monitoring that one connection via poll(...). In this way the pool preserves performance on the low-to-mid end and scalability on the high end.

OK, that’s the basic introduction to thread_pool_hybrid. Feel free to email me with any questions (me@damienkatz.com). Next, I’ll write about some weirdness I found and how you can contribute to this project or to ThrustDB as a whole. Stay tuned…